4. Math Bootcamp

Introduction

Here we’ll first review basic vector calculus: grad, div, curl, Gauss’ theorem, Stokes’ theorem and their variants. This is essential material for graduate E&M! Another crucial tool for understanding these fields is the Fourier transform, which also introduces in a natural way Dirac’s indispensable delta function, discussed at the end of the lecture.

Vectors: Cartesian Coordinates, Kronecker Delta

In E&M (in contrast to, say, QM) our vectors are not in an abstract space: they’re in ordinary three-dimensional space.

The essential properties of a linear vector space are that addition and multiplication by a scalar (a number) are defined and give another vector in the space.

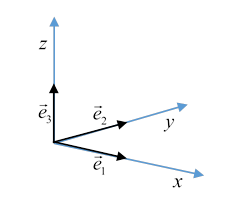

Our ordinary three-dimensional space is spanned by three orthonormal basis vectors, the unit vectors along the axes, often written $\hat{\mathbf{i}}, \hat{\mathbf{j}}, \hat{\mathbf{k}}$.

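For notational efficiency, it is often convenient to write these unit vectors as $\hat{\mathbf{e}}_1, \hat{\mathbf{e}}_2, \hat{\mathbf{e}}_3$, and their mutual orthogonality as

$$\hat{\mathbf{e}}_i\cdot\hat{\mathbf{e}}_j = \delta_{ij};$$

this is called the Kronecker delta: it’s equal to 1 if $i = j$, zero if $i \neq j$.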

Dummy Suffixes

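Any 3D vector is a sum over components (the basis vectors are said to “span the space”):

$$\mathbf{A} = A_1\hat{\mathbf{e}}_1 + A_2\hat{\mathbf{e}}_2 + A_3\hat{\mathbf{e}}_3 = \sum_{i=1}^{3}A_i\hat{\mathbf{e}}_i = A_i\hat{\mathbf{e}}_i,$$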

where we’ve introduced Einstein’s dummy suffix notation in that last step:

If a suffix appears twice in an expression, the sum over 1,2,3 is understood.

If the same suffix appears more than twice, you’ve made a mistake: the expression is meaningless.

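The position vector

$$\mathbf{r} = x\hat{\mathbf{i}} + y\hat{\mathbf{j}} + z\hat{\mathbf{k}}$$

becomes

$$\mathbf{r} = x_i\hat{\mathbf{e}}_i.$$

(It is also often written $\mathbf{r} = (x_1, x_2, x_3)$.)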

The magnitude of the vector is written

$$|\mathbf{r}| = r = \sqrt{x_ix_i}.$$

(You may be aware that in general relativity, this would be written $r^2 = x^ix_i$, and summation is only allowed between up and down indices. This refinement is unnecessary in E&M in ordinary space, that is, not gravitationally warped space.)

Rotating a Displacement Vector, Orthogonal Matrices

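Consider the incremental displacement vector

$$d\mathbf{r} = (dx_1, dx_2, dx_3).$$

We know how this transforms under a rotation: for example, a rotation of the displacement vector through an angle $\theta$ about the $x_3$ axis gives new components in the fixed frame of reference:

$$\begin{pmatrix}dx_1'\\ dx_2'\\ dx_3'\end{pmatrix} = \begin{pmatrix}\cos\theta & -\sin\theta & 0\\ \sin\theta & \cos\theta & 0\\ 0 & 0 & 1\end{pmatrix}\begin{pmatrix}dx_1\\ dx_2\\ dx_3\end{pmatrix}.$$

(One way to see this is to write $d\mathbf{r}$ as a sum of three vectors, each along an axis, then rotate them separately, write down their new components, and add.)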

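However, confusingly, a more common transformation is to find the new components of a fixed vector in a new frame of reference, which is, say, the old frame of reference rotated by $\theta$ about the $x_3$ axis. This is of course

$$\begin{pmatrix}dx_1'\\ dx_2'\\ dx_3'\end{pmatrix} = \begin{pmatrix}\cos\theta & \sin\theta & 0\\ -\sin\theta & \cos\theta & 0\\ 0 & 0 & 1\end{pmatrix}\begin{pmatrix}dx_1\\ dx_2\\ dx_3\end{pmatrix}.$$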

(It's worth mentally checking for a small angle to be sure you've got this sign right.)

This is an orthogonal matrix: it transforms orthogonal vectors into orthogonal vectors, and its transpose is its inverse, $R^TR = RR^T = I$, as is obvious from the form above.

Since a general rotation can be written as a succession of rotations around axes, $R^TR = I$ for any rotation.

Exercise: Prove that if $A^TA = I$ and $B^TB = I$, then $(AB)^T(AB) = I$.
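A quick numerical sanity check of the claim that a succession of rotations about axes is still orthogonal (a check, not the proof the exercise asks for). This is a minimal Python sketch using plain lists; the helper names `rot_x3`, `rot_x1`, `matmul` and the particular angles are illustrative choices, with both axis rotations written in the frame-rotation convention above.

```python
import math

def rot_x3(theta):
    """Frame rotated by theta about x3: new components of a fixed vector."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, s, 0.0], [-s, c, 0.0], [0.0, 0.0, 1.0]]

def rot_x1(theta):
    """Same convention, for a frame rotated about the x1 axis."""
    c, s = math.cos(theta), math.sin(theta)
    return [[1.0, 0.0, 0.0], [0.0, c, s], [0.0, -s, c]]

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]

def transpose(a):
    return [[a[j][i] for j in range(3)] for i in range(3)]

def is_identity(m, tol=1e-12):
    return all(abs(m[i][j] - (1.0 if i == j else 0.0)) < tol
               for i in range(3) for j in range(3))

# A "general" rotation, built as a succession of rotations about axes.
R = matmul(rot_x3(0.7), matmul(rot_x1(-1.2), rot_x3(2.1)))
print(is_identity(matmul(transpose(R), R)))  # True: R^T R = I
```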

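We’ll write the general rotation matrix as $R_{ij}$, so that $dx_i' = R_{ij}\,dx_j$.

Regarding the displacements as infinitesimals, we see that the transformation can be written

$$x_i' = R_{ij}x_j, \qquad R_{ij} = \frac{\partial x_i'}{\partial x_j},$$

and of course from the chain rule,

$$dx_i' = \frac{\partial x_i'}{\partial x_j}\,dx_j.$$

From this, we can deduce that for a rotational change of basis, the differential operator transforms as

$$\frac{\partial}{\partial x_i'} = \frac{\partial x_j}{\partial x_i'}\frac{\partial}{\partial x_j} = R_{ij}\frac{\partial}{\partial x_j},$$

using the inverse transformation $x_j = R_{ij}x_i'$ (the inverse of $R$ is its transpose).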

We’ll return to this soon.

Definition of a Vector

It’s not just those three numbers: a vector isn’t given just by specifying its components in one frame of reference; there has to be a prescription for finding its components in any frame of reference.

So we define a vector as having components $A_i$ in one frame, and require that its components $A_i'$ in a new frame are given by exactly the same linear transformation that gives the components of a displacement vector in the new frame in terms of the components in the original frame, that is, as discussed above, $dx_i' = R_{ij}\,dx_j$.

Hence by definition, under rotation the components of a vector transform by

$$A_i' = R_{ij}A_j.$$

Definition of a Tensor

For future reference, we’ll mention here what are called Cartesian tensors. A vector has a single suffix, as in $A_i$; a tensor has two or more suffixes, as in $T_{ij}$ or $T_{ijk}$, and hence 9, 27, etc. components in three dimensions.

Under rotation, where for a vector $A_i' = R_{ij}A_j$, for a second-rank (two-suffix) tensor

$$T_{ij}' = R_{ik}R_{jl}T_{kl}.$$

Each suffix transforms by the vector rule. We’ll soon come across important tensors.

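Finally, it’s worth asking: what are the eigenvectors of a rotation matrix? Let’s look at the one above, for rotation about the $x_3$ axis. Obviously, the only vector unaffected is one in the $x_3$ direction! But it’s a 3×3 matrix, so there should be two other vectors that are just multiplied by a number (the eigenvalue) and so stay pointing in the same direction. To see how that can be, we have to solve the eigenvalue equation

$$\begin{vmatrix}\cos\theta-\lambda & \sin\theta & 0\\ -\sin\theta & \cos\theta-\lambda & 0\\ 0 & 0 & 1-\lambda\end{vmatrix} = 0,$$

from which

$$(1-\lambda)\left[(\cos\theta-\lambda)^2 + \sin^2\theta\right] = 0, \qquad \lambda = 1,\ e^{i\theta},\ e^{-i\theta}.$$

The result $\lambda = 1$ has eigenvector $(0, 0, 1)$, just the axis of rotation; the others are $\frac{1}{\sqrt2}(1, \pm i, 0)$, with eigenvalues $e^{\pm i\theta}$ respectively. That’s why we couldn’t see them: they’re in complex three-space.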

Exercise: check explicitly that these are indeed eigenvectors.
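One way to do the check numerically, as a minimal Python sketch: it uses the frame-rotation matrix (with $+\sin\theta$ in the top row) and an arbitrary angle; `is_eigenvector` is an illustrative helper, not a library function. For the active rotation matrix (the transpose), the eigenvalues attached to $(1, \pm i, 0)$ swap.

```python
import cmath
import math

def rot_x3(theta):
    """Frame rotated by theta about x3 (the matrix in the eigenvalue equation)."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, s, 0.0], [-s, c, 0.0], [0.0, 0.0, 1.0]]

def apply(m, v):
    return [sum(m[i][j] * v[j] for j in range(3)) for i in range(3)]

def is_eigenvector(m, v, lam, tol=1e-12):
    """True if m v = lam v, component by component, to within tol."""
    mv = apply(m, v)
    return all(abs(mv[i] - lam * v[i]) < tol for i in range(3))

theta = 0.9
R = rot_x3(theta)
r2 = 1 / math.sqrt(2)
axis = [0.0, 0.0, 1.0]
plus = [r2, 1j * r2, 0.0]    # (1, i, 0) / sqrt(2)
minus = [r2, -1j * r2, 0.0]  # (1, -i, 0) / sqrt(2)

print(is_eigenvector(R, axis, 1.0))                      # True
print(is_eigenvector(R, plus, cmath.exp(1j * theta)))    # True
print(is_eigenvector(R, minus, cmath.exp(-1j * theta)))  # True
```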

In fact, these eigenvectors correspond to the states of a spin one particle in quantum mechanics, and they are also relevant in describing circularly polarized light, as we shall see.

Dot Product

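The scalar or dot product of two vectors is

$$\mathbf{A}\cdot\mathbf{B} = A_1B_1 + A_2B_2 + A_3B_3 = A_iB_i,$$

using the dummy suffix notation.

Note that the property $R^TR = I$, that is, $R_{ij}R_{ik} = \delta_{jk}$, guarantees that inner products, including vector length, will be preserved under transformation to another orthogonal basis.

Check this: write $A_i' = R_{ij}A_j$, $B_i' = R_{ik}B_k$, and show that $A_i'B_i' = A_jB_j$.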
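The invariance can also be seen numerically. A minimal Python sketch, assuming a rotation built from three successive axis rotations; the particular angles, the random seed, and the helper names are arbitrary choices for illustration.

```python
import math
import random

def rot_x3(t):
    c, s = math.cos(t), math.sin(t)
    return [[c, s, 0.0], [-s, c, 0.0], [0.0, 0.0, 1.0]]

def rot_x1(t):
    c, s = math.cos(t), math.sin(t)
    return [[1.0, 0.0, 0.0], [0.0, c, s], [0.0, -s, c]]

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]

def rotation(alpha, beta, gamma):
    """Orthogonal matrix from successive rotations about x3, then x1, then x3."""
    return matmul(rot_x3(gamma), matmul(rot_x1(beta), rot_x3(alpha)))

def transform(R, v):
    # A'_i = R_ij A_j
    return [sum(R[i][j] * v[j] for j in range(3)) for i in range(3)]

def dot(a, b):
    # A_i B_i, summed over the dummy suffix i
    return sum(x * y for x, y in zip(a, b))

random.seed(1)
A = [random.uniform(-1, 1) for _ in range(3)]
B = [random.uniform(-1, 1) for _ in range(3)]
R = rotation(0.3, 1.1, -2.4)
print(dot(A, B), dot(transform(R, A), transform(R, B)))  # equal up to rounding
```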

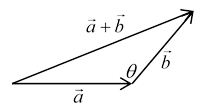

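Evaluating $\mathbf{A}\cdot\mathbf{B}$ in a frame with $\mathbf{A}$ along the $x_1$ axis and $\mathbf{B}$ in the $x_1x_2$ plane makes clear that $\mathbf{A}\cdot\mathbf{B} = |\mathbf{A}||\mathbf{B}|\cos\theta$, where $\theta$ is the angle between the vectors; it’s also evidently the component of one vector in the direction of the other, multiplied by that other vector’s magnitude.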

Cross Product, Levi-Civita Symbol, Triple Product

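The vector or cross product of two vectors is

$$\mathbf{A}\times\mathbf{B} = \begin{vmatrix}\hat{\mathbf{e}}_1 & \hat{\mathbf{e}}_2 & \hat{\mathbf{e}}_3\\ A_1 & A_2 & A_3\\ B_1 & B_2 & B_3\end{vmatrix} = (A_2B_3 - A_3B_2,\ A_3B_1 - A_1B_3,\ A_1B_2 - A_2B_1).$$

This cross product is written more succinctly using the Levi-Civita symbol, $\varepsilon_{ijk}$.

Here the suffixes go over the values 1,2,3; $\varepsilon_{123} = \varepsilon_{231} = \varepsilon_{312} = 1$ (the “counterclockwise”, cyclic orderings), $\varepsilon_{321} = \varepsilon_{213} = \varepsilon_{132} = -1$ (the clockwise orderings), and if any two suffixes are equal, $\varepsilon_{ijk} = 0$.

Thus

$$(\mathbf{A}\times\mathbf{B})_i = \varepsilon_{ijk}A_jB_k$$

($j, k$ being summed-over dummy suffixes.)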
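A minimal Python sketch of the suffix formula, with suffixes 0, 1, 2 standing in for 1, 2, 3. The closed form used in `levi_civita` is just one convenient way to encode the sign rules above (an implementation choice, not part of the notes); the result is compared with the explicit component formula.

```python
def levi_civita(i, j, k):
    """epsilon_ijk with suffixes 0, 1, 2 standing for 1, 2, 3: +1, -1 or 0."""
    return (i - j) * (j - k) * (k - i) // 2

def cross_eps(a, b):
    # (A x B)_i = eps_ijk A_j B_k, with j and k summed over
    return [sum(levi_civita(i, j, k) * a[j] * b[k] for j in range(3) for k in range(3))
            for i in range(3)]

def cross_explicit(a, b):
    # component formula from the determinant
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]

print(cross_eps([1, 0, 0], [0, 1, 0]))  # [0, 0, 1]
```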

This has a simple geometric interpretation: taking the two vectors to have a common end (say, the origin), see them as two adjacent sides of a parallelogram. The vector $\mathbf{A}\times\mathbf{B}$ has magnitude $|\mathbf{A}||\mathbf{B}|\sin\theta$, equal to the area of this parallelogram, and direction perpendicular to its plane.

The sign has to be fixed by convention: it’s defined to be the direction of progress of a right-handed corkscrew, the handle being rotated from $\mathbf{A}$ to $\mathbf{B}$.

Note that $\mathbf{A}\times\mathbf{B} = -\mathbf{B}\times\mathbf{A}$.

The triple product $\mathbf{A}\cdot(\mathbf{B}\times\mathbf{C}) = \varepsilon_{ijk}A_iB_jC_k$ is a scalar with magnitude equal to the volume of the parallelepiped having the three vectors as edges, with their (non-arrowhead) ends at the same corner. Think of it as base area times height: the dot product of $\mathbf{A}$ with $\mathbf{B}\times\mathbf{C}$ equals the base area $|\mathbf{B}\times\mathbf{C}|$ multiplied by the component of $\mathbf{A}$ perpendicular to the base plane, and that’s the height of the parallelepiped.
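A small numerical illustration of the base-area-times-height picture, as a Python sketch. The vectors are made up so the geometry is easy to read off: $\mathbf{B}$ and $\mathbf{C}$ span a 1 by 3 rectangle in the $x_1x_2$ plane, and $\mathbf{A}$ rises a height 2 above it.

```python
def cross(a, b):
    # explicit component formula for A x B
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def triple(a, b, c):
    """A . (B x C): signed volume of the parallelepiped with edges A, B, C."""
    return dot(a, cross(b, c))

B = [1.0, 0.0, 0.0]
C = [0.0, 3.0, 0.0]    # base: 1 x 3 rectangle in the x1 x2 plane
A = [0.3, 0.2, 2.0]    # slanted edge; its x3 component (2) is the height

print(triple(A, B, C))  # 6.0 = base area 3 times height 2
print(triple(B, C, A))  # 6.0: a cyclic permutation gives the same volume
print(triple(A, C, B))  # -6.0: swapping two vectors flips the sign
```

Coplanar vectors enclose no volume, so their triple product vanishes.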

An Important Identity

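A triple product that often comes up in E&M is

$$\mathbf{A}\times(\mathbf{B}\times\mathbf{C}) = \mathbf{B}(\mathbf{A}\cdot\mathbf{C}) - \mathbf{C}(\mathbf{A}\cdot\mathbf{B}).$$

This is easily proved using the important identity

$$\varepsilon_{ijk}\varepsilon_{klm} = \delta_{il}\delta_{jm} - \delta_{im}\delta_{jl}.$$

This identity can be established by listing possible values: there aren’t that many, remember the $\varepsilon$’s are zero if two suffixes are the same. Do it!

It’s also worth thinking about the vector identity geometrically: $\mathbf{A}\times(\mathbf{B}\times\mathbf{C})$ must be in the $\mathbf{B},\mathbf{C}$ plane, since it’s perpendicular to $\mathbf{B}\times\mathbf{C}$, so it’s some linear combination of $\mathbf{B}$ and $\mathbf{C}$; and it must be perpendicular to $\mathbf{A}$.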
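Listing the values by hand is the exercise; as a cross-check, here is a minimal Python sketch that brute-forces all $3^4 = 81$ combinations of the free suffixes $i, j, l, m$ (with $k$ summed), then tests the vector identity on random vectors. The random seed and ranges are arbitrary.

```python
import itertools
import random

def eps(i, j, k):
    """Levi-Civita symbol with suffixes 0, 1, 2 standing for 1, 2, 3."""
    return (i - j) * (j - k) * (k - i) // 2

def delta(i, j):
    """Kronecker delta."""
    return 1 if i == j else 0

def identity_holds():
    """Check eps_ijk eps_klm = delta_il delta_jm - delta_im delta_jl for all free i, j, l, m."""
    for i, j, l, m in itertools.product(range(3), repeat=4):
        lhs = sum(eps(i, j, k) * eps(k, l, m) for k in range(3))  # k is the dummy suffix
        rhs = delta(i, l) * delta(j, m) - delta(i, m) * delta(j, l)
        if lhs != rhs:
            return False
    return True

def cross(a, b):
    return [sum(eps(i, j, k) * a[j] * b[k] for j in range(3) for k in range(3)) for i in range(3)]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

print(identity_holds())  # True

# The vector identity on random vectors.
random.seed(0)
A, B, C = ([random.uniform(-2, 2) for _ in range(3)] for _ in range(3))
lhs = cross(A, cross(B, C))
rhs = [B[i] * dot(A, C) - C[i] * dot(A, B) for i in range(3)]
print(max(abs(x - y) for x, y in zip(lhs, rhs)))  # rounding-level difference
```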

What’s a Vector Field?

A vector field is a generalization of an ordinary function $f(\mathbf{r})$.

An ordinary function assigns a number to each point in the space where it’s defined, for example a map of temperature over an area (or even a volume).

A vector field gives a vector for each point in some space, such as $\mathbf{A}(\mathbf{r}) = \big(A_1(\mathbf{r}), A_2(\mathbf{r}), A_3(\mathbf{r})\big)$.

Exercise: sketch roughly the magnetic field for a small bar magnet. That’s a vector field: strictly, there’s an arrow at each point in space.

What we’ve called an ordinary function is often called a scalar function or scalar field, to contrast it with a vector field. The electrostatic potential in some volume is a scalar field.

The Gradient Operator

Definition

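The gradient operator, called grad, is

$$\boldsymbol{\nabla} = \hat{\mathbf{e}}_i\frac{\partial}{\partial x_i} = \left(\frac{\partial}{\partial x}, \frac{\partial}{\partial y}, \frac{\partial}{\partial z}\right).$$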

To picture the gradient operator, we’ll look at a two-dimensional example: a contour map of a stretch of territory with mountains, etc.

The many lines on the map connecting points at the same height are called contours. Essentially, the map shows height as a function of latitude and longitude; we’ll use coordinates $(x, y)$ from some local origin, so the height is a scalar function $h(x, y)$.

Then $\boldsymbol{\nabla}h = (\partial h/\partial x, \partial h/\partial y)$ is a vector field, giving, in fact, the local gradient in the usual geographic sense, that is, rate of change of height with respect to change in horizontal position.

If you take a step $d\mathbf{r} = (dx, dy)$ on this terrain your change in altitude is

$$dh = \frac{\partial h}{\partial x}dx + \frac{\partial h}{\partial y}dy = \boldsymbol{\nabla}h\cdot d\mathbf{r}.$$

Exercise: Check from the transformation laws given above that $\boldsymbol{\nabla}h\cdot d\mathbf{r}$ is invariant under rotations, and interpret your result. Now prove that taking a walk and coming back, $\oint\boldsymbol{\nabla}h\cdot d\mathbf{r} = 0$.

Evidently, $\boldsymbol{\nabla}h$ is a vector field in the two-dimensional space, and in fact, for a walker of mass $m$, $-mg\boldsymbol{\nabla}h$ is just the component of the gravitational force for motion confined to the surface (the other component is cancelled by the normal force holding you to the surface). That is, $mg\boldsymbol{\nabla}h\cdot d\mathbf{r}$ is the work you do against gravity when you take the small step $d\mathbf{r}$ with horizontal components $(dx, dy)$: this expression is the increase in your gravitational potential energy.
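The closed-walk result can be illustrated numerically. This Python sketch assumes a made-up terrain $h(x, y) = e^{-(x^2 + 2y^2)}$, with its gradient worked out by hand, and approximates $\int\boldsymbol{\nabla}h\cdot d\mathbf{r}$ along a parametrized path with the midpoint rule; the path shapes and the helper names (`walk`, `circle`, `line`) are illustrative.

```python
import math

def h(x, y):
    """Hypothetical terrain: a single smooth hill centred at the origin."""
    return math.exp(-(x * x + 2 * y * y))

def grad_h(x, y):
    # gradient worked out by hand: (dh/dx, dh/dy)
    return (-2 * x * h(x, y), -4 * y * h(x, y))

def walk(path, velocity, t0, t1, n=4000):
    """Midpoint-rule estimate of the integral of grad h . dr along r(t), t0 <= t <= t1."""
    dt = (t1 - t0) / n
    total = 0.0
    for i in range(n):
        t = t0 + (i + 0.5) * dt
        gx, gy = grad_h(*path(t))
        vx, vy = velocity(t)
        total += (gx * vx + gy * vy) * dt
    return total

# Closed walk: once around a circle of radius 1 centred at (0.3, -0.2).
def circle(t):
    return (0.3 + math.cos(t), -0.2 + math.sin(t))

def circle_velocity(t):
    return (-math.sin(t), math.cos(t))

# Open walk: a straight line from P to Q.
P, Q = (-1.0, 0.5), (0.8, -0.3)

def line(t):
    return (P[0] + t * (Q[0] - P[0]), P[1] + t * (Q[1] - P[1]))

def line_velocity(t):
    return (Q[0] - P[0], Q[1] - P[1])

print(walk(circle, circle_velocity, 0.0, 2 * math.pi))     # essentially zero
print(walk(line, line_velocity, 0.0, 1.0), h(*Q) - h(*P))  # both give the change in altitude
```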

Equipotentials and the Gradient Operator

The lines of constant height on a geographical map, the contour lines mentioned above, are clearly gravitational equipotentials: it takes no work against gravity to walk around at constant height, and your potential energy doesn’t change. So, as we’ve found, for a step $d\mathbf{r}$ along such a line we must have $\boldsymbol{\nabla}h\cdot d\mathbf{r} = 0$.

That is to say, the vector field $\boldsymbol{\nabla}h$ is perpendicular to the equipotential lines everywhere: it points uphill along the line of maximum slope, and $-\boldsymbol{\nabla}h$ points in the direction of steepest descent. Analogously, the direction of the electric field at a point in an electrostatic problem is always perpendicular to the equipotential surface (in three dimensions) through that point.

Exercise: (We’ll be doing this in more detail later; this is to check you get the idea.) Sketch equipotentials and field lines for a system of two charges of equal magnitude: first with the same sign, then with opposite signs. In particular, sketch the lines near a saddle point: the midpoint between two like charges.

The Divergence Operator: Definitions, Gauss’ Theorem, Visualization

Definition

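The divergence (called div) is formally defined as the differential operator $\boldsymbol{\nabla}$ acting on a vector field:

$$\operatorname{div}\mathbf{A} = \boldsymbol{\nabla}\cdot\mathbf{A} = \frac{\partial A_x}{\partial x} + \frac{\partial A_y}{\partial y} + \frac{\partial A_z}{\partial z} = \frac{\partial A_i}{\partial x_i}.$$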

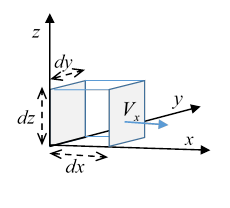

There’s another way to define divergence: take a tiny cubical box, sides $dx, dy, dz$, around some point $\mathbf{r}$; then

$$\boldsymbol{\nabla}\cdot\mathbf{A} = \lim_{dx,\,dy,\,dz\to0}\frac{1}{dx\,dy\,dz}\oint_{\text{box}}\mathbf{A}\cdot d\mathbf{S}.$$

Here $d\mathbf{S}$ is a vector representing an increment of surface area: its magnitude is the area, and its direction is perpendicular to the surface, but we have to specify which way; for a closed surface the convention is almost always outwards.

Let’s check that the two definitions are equivalent: consider first the contribution to the surface integral from the two faces perpendicular to the $x$ axis; they have areas of magnitude $dy\,dz$. In the limit of a small box, we can neglect the variation of $\mathbf{A}$ over each face (in the $y, z$ directions), and since the $d\mathbf{S}$ for the two faces are in opposite directions, $\pm\hat{\mathbf{x}}\,dy\,dz$, the only contribution to the integral comes from the difference in $A_x$ between the two faces, which are separated by $dx$.

Hence the net contribution to the integral over the cube surface from those faces is $\left[A_x(x + dx) - A_x(x)\right]dy\,dz = \frac{\partial A_x}{\partial x}dx\,dy\,dz$, and the product of infinitesimals cancels with the denominator (in the expression for $\boldsymbol{\nabla}\cdot\mathbf{A}$ above), leaving just $\partial A_x/\partial x$. The two other pairs of faces make corresponding contributions, establishing the equivalence of the two definitions.
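The box definition can be checked numerically for a particular field. This Python sketch assumes a made-up polynomial field $\mathbf{A} = (x^2y,\ yz,\ xz^2)$, computes the outward flux through a small cube face by face (midpoint rule on each face), divides by the volume, and compares with $\partial A_i/\partial x_i$ worked out by hand. The cube size and grid are illustrative choices.

```python
def A(x, y, z):
    """Hypothetical smooth vector field, chosen for illustration."""
    return (x * x * y, y * z, x * z * z)

def div_A(x, y, z):
    # d(x^2 y)/dx + d(y z)/dy + d(x z^2)/dz, worked out by hand
    return 2 * x * y + z + 2 * x * z

def box_flux(field, centre, side, m=4):
    """Outward flux through a cube of edge `side` centred at `centre` (m x m midpoint cells per face)."""
    half, cell = side / 2, side / m
    offsets = [-half + (i + 0.5) * cell for i in range(m)]
    total = 0.0
    for axis in range(3):
        u, w = [a for a in range(3) if a != axis]
        for sign in (1, -1):  # outward normal is +axis on one face, -axis on the other
            for ou in offsets:
                for ow in offsets:
                    p = list(centre)
                    p[axis] += sign * half
                    p[u] += ou
                    p[w] += ow
                    total += sign * field(*p)[axis] * cell * cell
    return total

centre, side = (0.4, -0.7, 1.3), 1e-3
print(box_flux(A, centre, side) / side ** 3, div_A(*centre))  # both about 1.78
```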

Gauss’ Theorem

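Gauss’ Theorem, a.k.a. the Divergence Theorem, is:

$$\int_V\boldsymbol{\nabla}\cdot\mathbf{A}\,dV = \oint_S\mathbf{A}\cdot d\mathbf{S}.$$

This easily follows from the “small box” definition of the divergence: divide the whole volume into tiny boxes, so the volume integral becomes a sum of surface integrals over the myriad box surfaces. But the integrals over the internal surfaces all cancel (each internal face is shared by two boxes, whose outward $d\mathbf{S}$ point in opposite directions), leaving just that over the bounding surface.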

Visualizing Divergence: Velocity Field for an Incompressible Fluid

To picture what the divergence of a vector field can look like, it’s helpful to visualize the flow of an incompressible fluid, with the velocity vector field $\mathbf{v}(\mathbf{r})$. Let’s assume for the moment that it’s a steady flow, not varying in time. Obviously, if the fluid is incompressible, it can’t be piling up anywhere, and we’ll assume there are no bubbles: it fills all the space we’re considering. (We’re taking the density of the fluid to be $\rho = 1$, so the velocity field is the same as the mass flow; remember the fluid is incompressible.)

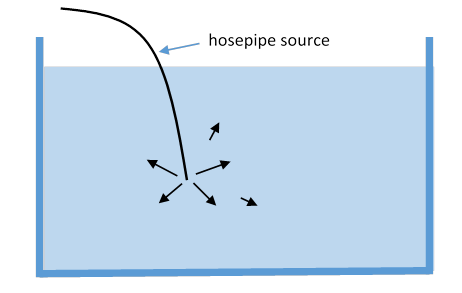

Then, from the small cube picture, $\boldsymbol{\nabla}\cdot\mathbf{v} = 0$: as much is flowing into any space as is flowing out, everywhere. How do we change that? By having a source of fluid.

Consider a large body of water, like a deep large pool, and slip a thin hosepipe in, so the end is deep in the water somewhere. Now pump in water at a steady rate. Once things have settled down, if you imagine any surface within the water that encloses the source, $\oint\mathbf{v}\cdot d\mathbf{S}$ over that surface must equal the rate of supply of water, call it $Q$.

If the water is at rest apart from the motion caused by this supply, $\mathbf{v}$ will point outwards symmetrically from the source, and the surface integral, now taken over a sphere of radius $r$, will tell us that $4\pi r^2v(r) = Q$, so $\mathbf{v} = Q\hat{\mathbf{r}}/4\pi r^2$. Furthermore, $\boldsymbol{\nabla}\cdot\mathbf{v}$ is zero everywhere except at the source, where it’s evidently large. (We’re assuming the walls are far away and we are ignoring them.)
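A numerical sketch of the point-source flow, assuming an arbitrary supply rate $Q$: the flux through spheres of different radii comes out as $Q$ each time, while a finite-difference estimate of $\boldsymbol{\nabla}\cdot\mathbf{v}$ at a point away from the source is essentially zero. Grid sizes and the step `h` are illustrative choices.

```python
import math

Q = 2.5  # hypothetical supply rate (volume per unit time)

def v(x, y, z):
    """Point source at the origin: v = Q r_hat / (4 pi r^2) = Q r / (4 pi r^3)."""
    r = math.sqrt(x * x + y * y + z * z)
    f = Q / (4 * math.pi * r ** 3)
    return (f * x, f * y, f * z)

def flux_through_sphere(radius, n=100):
    """Outward flux through a sphere centred on the source, midpoint rule in (theta, phi)."""
    dth, dph = math.pi / n, 2 * math.pi / n
    total = 0.0
    for i in range(n):
        th = (i + 0.5) * dth
        for j in range(n):
            ph = (j + 0.5) * dph
            nx = math.sin(th) * math.cos(ph)
            ny = math.sin(th) * math.sin(ph)
            nz = math.cos(th)
            vx, vy, vz = v(radius * nx, radius * ny, radius * nz)
            # dS = n_hat * radius^2 sin(theta) dtheta dphi
            total += (vx * nx + vy * ny + vz * nz) * radius ** 2 * math.sin(th) * dth * dph
    return total

def divergence(field, x, y, z, h=1e-4):
    """Central-difference estimate of dv_x/dx + dv_y/dy + dv_z/dz."""
    return ((field(x + h, y, z)[0] - field(x - h, y, z)[0])
            + (field(x, y + h, z)[1] - field(x, y - h, z)[1])
            + (field(x, y, z + h)[2] - field(x, y, z - h)[2])) / (2 * h)

for radius in (0.5, 1.0, 4.0):
    print(radius, flux_through_sphere(radius))  # close to Q each time
print(divergence(v, 1.0, 0.5, -0.3))            # essentially zero away from the source
```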

This is of course the pattern of the electric field from a localized charge distribution. We see that $\boldsymbol{\nabla}\cdot\mathbf{v}$ at the hose is the local rate of water supply per unit volume; integrating over the finite volume of the hose nozzle gives the total supply $Q$. Similarly, the divergence of the electric field gives the local charge density (divided by $\varepsilon_0$): the first of Maxwell’s equations is

$$\boldsymbol{\nabla}\cdot\mathbf{E} = \rho/\varepsilon_0.$$

A negative charge, in our watery analogy, is like a hosepipe with water being pumped away: imagine the flow field for two hoses not far from each other, one supplying water, the other taking it away. This is like two opposite charges.

A true point charge has its problems, mathematically and physically, which we’ll discuss later. What about a uniformly charged (throughout its volume) sphere? That has uniform nonzero divergence inside it, and it’s an easy exercise using the divergence theorem to find that the spherically symmetric electric field increases linearly from zero at the center to the sphere’s surface, then falls off as the inverse square. (This is the same as the gravitational field inside and outside the Earth, apart from sign, and assuming a uniform-density Earth.)
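The result of that exercise, written out as a small Python function. This sketch works in units with $\varepsilon_0 = 1$ (an assumption for simplicity): inside, Gauss’ theorem gives $E = \rho r/3\varepsilon_0$; outside, $E = Q/4\pi\varepsilon_0r^2$ with $Q = \frac43\pi R^3\rho$. A finite-difference divergence, $\frac{1}{r^2}\frac{d}{dr}(r^2E)$, recovers $\rho$ inside and zero outside.

```python
import math

EPS0 = 1.0  # units with epsilon_0 = 1, assumed here for simplicity

def field_uniform_sphere(r, R, rho):
    """Radial E field of a ball of radius R with uniform charge density rho (from Gauss' theorem)."""
    if r < R:
        # enclosed charge (4/3) pi r^3 rho spread over area 4 pi r^2
        return rho * r / (3 * EPS0)
    Q = 4 / 3 * math.pi * R ** 3 * rho
    return Q / (4 * math.pi * EPS0 * r ** 2)

def radial_divergence(E, r, h=1e-5):
    """Divergence of a radial field E(r) r_hat: (1/r^2) d(r^2 E)/dr, by central differences."""
    return ((r + h) ** 2 * E(r + h) - (r - h) ** 2 * E(r - h)) / (2 * h * r * r)

def E(r):
    return field_uniform_sphere(r, 1.0, 3.0)  # R = 1, rho = 3

for r in (0.25, 0.5, 1.0, 2.0, 4.0):
    print(r, E(r))  # rises linearly to about 1.0 at r = R, then falls as 1/r^2
print(radial_divergence(E, 0.5), radial_divergence(E, 2.0))  # about rho = 3, and about 0
```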

Variations of Gauss’ Theorem

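Consider the abstract vector field $\mathbf{A} = \mathbf{c}\,\phi(\mathbf{r})$, where $\mathbf{c}$ is a constant vector and $\phi$ a scalar function of position. Gauss’ theorem for this field is $\mathbf{c}\cdot\int_V\boldsymbol{\nabla}\phi\,dV = \mathbf{c}\cdot\oint_S\phi\,d\mathbf{S}$, and since $\mathbf{c}$ is arbitrary,

$$\int_V\boldsymbol{\nabla}\phi\,dV = \oint_S\phi\,d\mathbf{S}.$$

Exercise: Think about $\phi$ as the local pressure in a fluid at rest under gravity. Take some volume completely inside the fluid, and interpret the equation physically.

More variations: writing Gauss’ theorem as

$$\int_V\frac{\partial A_i}{\partial x_i}\,dV = \oint_S A_in_i\,dS,$$

where $\hat{\mathbf{n}}$ is the unit vector pointing outwards, we see that we can formally replace the $A_i$ by a tensor $T_{ij}$ or $T_{ijk}$, etc., to get

$$\int_V\frac{\partial T_{ij}}{\partial x_i}\,dV = \oint_S T_{ij}n_i\,dS,$$

because this is just the divergence theorem repeated three times, for $j = 1, 2, 3$.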

The Curl Operator: Definitions, Stokes’ Theorem, Visualizations

Definition

The curl is:

This rather cumbersome expression is more succinctly written using the Levi-Civita symbol, introduced earlier. Recall, the suffixes go over the values 1,2,3; and the counter clockwise and if any two suffixes are equal, .

So switching from to 1,2,3,

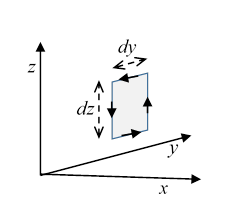

And, just as for the div, there’s an integral way to express this:

To find the component of the curl in a particular direction, we take a small square contour perpendicular to that direction (so its area is in that direction) and find the limit of a line integral of the vector field around that contour as the contour size goes to zero, then divide the result by the area of the square.

So for the component,

The argument closely parallels that for the divergence: now we take opposite sides together, they nearly cancel, etc. It’s left as an exercise for the reader.
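Here is the small-contour recipe carried out numerically, as a minimal Python sketch. The planar field $\mathbf{A} = (x^2y,\ xy,\ 0)$ is made up for illustration; its curl has $z$ component $y - x^2$. The circulation around a small counterclockwise square, divided by the square’s area, should approach that value as the square shrinks (for this field the leftover error is of order the square of the side).

```python
def A(x, y):
    """Hypothetical planar field (A_x, A_y), with A_z = 0."""
    return (x * x * y, x * y)

def curl_z(x, y):
    # dA_y/dx - dA_x/dy, worked out by hand
    return y - x * x

def circulation_square(field, x0, y0, side, m=8):
    """Counterclockwise line integral of A . dl around a square centred at (x0, y0)."""
    h = side / 2
    corners = [(x0 - h, y0 - h), (x0 + h, y0 - h), (x0 + h, y0 + h), (x0 - h, y0 + h)]
    total = 0.0
    for (xa, ya), (xb, yb) in zip(corners, corners[1:] + corners[:1]):
        dx, dy = (xb - xa) / m, (yb - ya) / m
        for i in range(m):
            s = (i + 0.5) / m  # midpoint of each sub-segment
            ax, ay = field(xa + s * (xb - xa), ya + s * (yb - ya))
            total += ax * dx + ay * dy
    return total

x0, y0 = 0.6, -0.25
for side in (0.1, 0.01, 0.001):
    print(side, circulation_square(A, x0, y0, side) / side ** 2, curl_z(x0, y0))
```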

Stokes’ Theorem

This definition leads easily to Stokes’ Theorem, relating an integral over an open surface $S$ to an integral around its boundary $C$:

$$\int_S(\boldsymbol{\nabla}\times\mathbf{A})\cdot d\mathbf{S} = \oint_C\mathbf{A}\cdot d\mathbf{l}.$$

Now we divide the surface into many small squares and take the sum of the line integrals around all of them: all the interior parts cancel in pairs (each interior edge is traversed once in each direction), leaving the integral around the perimeter, the curve $C$, the edge of the surface $S$.

The Electrostatic Field Has Zero Curl

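Evidently, since $\oint\mathbf{E}\cdot d\mathbf{l}$ is (minus) the change in potential on going around a closed circuit, which is zero, an electrostatic field from a point charge has zero curl everywhere; therefore so does any other electrostatic field, since experimentally it’s found that electric fields just add linearly.

This is really equivalent to saying that any electrostatic field is the gradient of a single-valued potential,

$$\mathbf{E} = -\boldsymbol{\nabla}\varphi,$$

and it’s easy to see that $\boldsymbol{\nabla}\times\boldsymbol{\nabla}\varphi = 0$ from the antisymmetry $\varepsilon_{ijk} = -\varepsilon_{ikj}$: $(\boldsymbol{\nabla}\times\boldsymbol{\nabla}\varphi)_i = \varepsilon_{ijk}\,\partial^2\varphi/\partial x_j\partial x_k$, and the order of differentiation doesn’t matter.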

(Nitpicking note: We’ll soon see that zero curl only necessarily means the field is a gradient if the space is simply connected.)

Visualizing Curly Fields: A Whirlpool in Incompressible Fluid and its Magnetic Analog

From this picture of the curl as the limit of an integral around a small contour, divided by the area enclosed by the contour, can a field with nonzero curl be visualized as the flow field of some incompressible fluid?

(The motivation here is visualizing the magnetostatic (time-independent) field, for which Maxwell’s second equation, $\boldsymbol{\nabla}\cdot\mathbf{B} = 0$, assures us a sourceless incompressible fluid scenario will be valid. Furthermore, for static fields we have $\boldsymbol{\nabla}\times\mathbf{B} = \mu_0\mathbf{j}$, $\mathbf{j}$ being the current density.)

Consider the velocity field from a whirlpool. We’ll just keep to two dimensions for simplicity, looking at the surface, say of an emptying bathtub over the drain, or a hurricane viewed from a satellite. Clearly now $\oint\mathbf{v}\cdot d\mathbf{l} \neq 0$ for a circle centered at the origin: there’s a whirlpool. In fact, for an incompressible inviscid fluid in steady rotational motion of this type, Kelvin proved that the integral

$$\oint\mathbf{v}\cdot d\mathbf{l} = \Gamma$$

is a path-independent constant for any contour including the origin. $\Gamma$ is called the circulation; on a circle of radius $r$ it gives $v(r) = \Gamma/2\pi r$. It follows that the integral is zero if the closed contour does not include the origin, and that means $\boldsymbol{\nabla}\times\mathbf{v} = 0$ for this flow except at the origin, where it is a two-dimensional delta function of strength $\Gamma$.
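A numerical check of the path independence, as a Python sketch with an arbitrary circulation $\Gamma$: the flow is $\mathbf{v} = \Gamma\hat{\boldsymbol{\theta}}/2\pi r$, and the line integral is approximated with the midpoint rule around circles. An off-centre circle that still encloses the origin gives $\Gamma$; one that doesn’t gives zero.

```python
import math

GAMMA = 3.0  # hypothetical circulation

def whirlpool(x, y):
    """v = Gamma / (2 pi r) along theta-hat, i.e. Gamma / (2 pi r^2) * (-y, x)."""
    f = GAMMA / (2 * math.pi * (x * x + y * y))
    return (-f * y, f * x)

def circulation(field, cx, cy, radius, n=2000):
    """Counterclockwise line integral of field . dl around a circle (midpoint rule in angle)."""
    dt = 2 * math.pi / n
    total = 0.0
    for i in range(n):
        t = (i + 0.5) * dt
        vx, vy = field(cx + radius * math.cos(t), cy + radius * math.sin(t))
        # dl = radius * (-sin t, cos t) dt
        total += (-vx * math.sin(t) + vy * math.cos(t)) * radius * dt
    return total

print(circulation(whirlpool, 0.0, 0.0, 1.0))   # Gamma
print(circulation(whirlpool, 0.3, -0.2, 1.0))  # Gamma: still encloses the origin
print(circulation(whirlpool, 3.0, 0.0, 1.0))   # essentially zero: origin outside
```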

In fact, this velocity field is the same as the magnetic field from a steady current $I$ in a long straight wire: from $\oint\mathbf{B}\cdot d\mathbf{l} = \mu_0I$, that field is $B = \mu_0I/2\pi r$, going down as $1/r$ with distance from the wire.

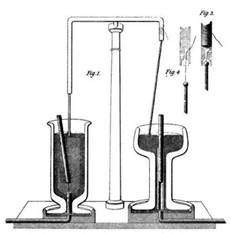

Obviously, this field cannot be represented as the gradient of a single-valued potential: if we had a magnetic monopole, and allowed it to move on a circular track around the wire, it would circle around with more and more energy! Actually that scenario is essentially equivalent to one of Faraday’s first electric motors (the one on the right in the illustration): he had a long vertical bar magnet, the north pole above the surface of a pool of mercury, the south pole far below, and a current-carrying wire suspended from a point vertically above the north pole, its end in the mercury. The wire rotated in the mercury.

Exercise: Where is the energy to keep the wire rotating coming from? And, what’s going on on the left side of the illustration?

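Evidently, although $\boldsymbol{\nabla}\times\mathbf{B} = 0$ everywhere outside the wire, this doesn’t guarantee that $\mathbf{B} = -\boldsymbol{\nabla}\varphi$ for some single-valued scalar potential $\varphi$; obviously it isn’t.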

Contrasting Different Curly Vector Fields: The Whirlpool and The Rotating Solid Body

Let’s think a bit more about the curl of the velocity field of a whirlpool: all the velocity lines move in circles, so it looks a bit curly. But to check, take a small contour between two adjacent circles, with radial ends, which won’t contribute to the integral. Around the curved sides, the speed goes as $1/r$, but the length of contour goes as $r$, so the two contributions to the contour integral from the curved sides cancel, meaning this flow field has zero curl (except at the central point).

Now contrast this with the velocity field of a rotating solid body: taking the same little contour, subtending an angle $d\theta$ between radii $r$ and $r + dr$, the contributions from the curved sides, using $v = \omega r$, will be

$$\omega(r + dr)^2\,d\theta - \omega r^2\,d\theta \approx 2\omega r\,dr\,d\theta,$$

from which, dividing by the contour area $r\,dr\,d\theta$, $\boldsymbol{\nabla}\times\mathbf{v} = 2\omega\hat{\mathbf{n}}$, where $\hat{\mathbf{n}}$ is a unit vector along the axis of rotation: the velocity curl has uniform magnitude throughout the rotating body.
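The contrast between the two flows shows up clearly in a finite-difference estimate of $(\boldsymbol{\nabla}\times\mathbf{v})_z$. This Python sketch assumes arbitrary values for $\omega$ and $\Gamma$; the step size `h` and the sample points are illustrative choices.

```python
import math

OMEGA = 1.7  # hypothetical angular velocity of the rotating body
GAMMA = 3.0  # hypothetical circulation of the whirlpool

def rigid(x, y):
    """Solid-body rotation about the z axis: v = omega z_hat x r = omega * (-y, x)."""
    return (-OMEGA * y, OMEGA * x)

def whirlpool(x, y):
    """Whirlpool: v = Gamma / (2 pi r) along theta-hat."""
    f = GAMMA / (2 * math.pi * (x * x + y * y))
    return (-f * y, f * x)

def curl_z(field, x, y, h=1e-5):
    """Central-difference estimate of dv_y/dx - dv_x/dy."""
    dvy_dx = (field(x + h, y)[1] - field(x - h, y)[1]) / (2 * h)
    dvx_dy = (field(x, y + h)[0] - field(x, y - h)[0]) / (2 * h)
    return dvy_dx - dvx_dy

for point in [(0.4, -1.3), (2.0, 0.5)]:
    print(curl_z(rigid, *point), curl_z(whirlpool, *point))  # about 2*OMEGA, and about 0
```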

In fact, this uniform curl describes the magnetic field inside a thick wire carrying a current, provided the current density is uniform over the whole cross-section.

A useful way to see the difference between these two circulating fields (whirlpool and rotating body) is to visualize the motion of a small piece of paper floating on the fluid surface. The rotation of the piece of paper measures the local curl of the velocity field. For the rotating body, the piece of paper will obviously rotate at the same rate as the body. Going around the whirlpool, though, it won’t rotate at all because the fluid adjacent to its inner edge is moving faster, and this rotates the paper relative to a fixed direction just enough to compensate for its rotation from the circular motion around the whirlpool center.

When Does Zero Curl Mean the Field is a Gradient?

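Now $\boldsymbol{\nabla}\times\boldsymbol{\nabla}\varphi = 0$ for any scalar function of position $\varphi$. Does this mean that for a vector field $\mathbf{A}$, if $\boldsymbol{\nabla}\times\mathbf{A} = 0$, there must be some function $\varphi$ such that $\mathbf{A} = \boldsymbol{\nabla}\varphi$? A possible proof is given by writing $\varphi(\mathbf{r}) = \int_{\mathbf{r}_0}^{\mathbf{r}}\mathbf{A}\cdot d\mathbf{l}$. This looks independent of the path, because if you choose two different paths, the difference between them is $\oint\mathbf{A}\cdot d\mathbf{l} = \int_S(\boldsymbol{\nabla}\times\mathbf{A})\cdot d\mathbf{S}$, over a surface $S$ spanning the two paths, and we know $\boldsymbol{\nabla}\times\mathbf{A} = 0$.

But there’s a catch!

This only works in a simply connected space. Otherwise, you can’t always put a surface spanning the two paths. For example, in an annular region, a whirlpool flow has zero curl, and in fact the velocity looks like $\boldsymbol{\nabla}(\Gamma\theta/2\pi)$, but the angle $\theta$ is not single-valued, so $\Gamma\theta/2\pi$ is not a potential in the usual sense.

Similarly, if $\boldsymbol{\nabla}\cdot\mathbf{B} = 0$, does this mean there’s a vector potential function $\mathbf{A}$ such that $\mathbf{B} = \boldsymbol{\nabla}\times\mathbf{A}$? Again, the answer is yes, but with topological reservations, as will be made clear below.

In practice, the important application is to the magnetic field: since $\boldsymbol{\nabla}\cdot\mathbf{B} = 0$, we have $\mathbf{B} = \boldsymbol{\nabla}\times\mathbf{A}$. Griffiths has a long proof that includes working through two exercises. In any case, the result is a special case of Helmholtz’ theorem, which we shall go through in detail soon.

An interesting generalization of the vector potential is to try to construct $\mathbf{A}$ for the field of a magnetic monopole, for which $\boldsymbol{\nabla}\cdot\mathbf{B} \neq 0$. Such a monopole, known as a Dirac monopole, is predicted by some field theories, but so far undetected. We’ll examine that in detail later.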

The Laplacian

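One final operator that’s ubiquitous in electrostatics is the Laplacian

$$\nabla^2 = \boldsymbol{\nabla}\cdot\boldsymbol{\nabla} = \frac{\partial^2}{\partial x^2} + \frac{\partial^2}{\partial y^2} + \frac{\partial^2}{\partial z^2}.$$

Most of electrostatics, in fact, is finding solutions of $\nabla^2\varphi = $ something, with various boundary conditions. You’ll be seeing it a lot.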

Fourier Transforms and Dirac’s Delta Function

Definition of Fourier Transform

(Note: for Fourier Series, see the basic review in my quantum notes here.)

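First, in one dimension: a “reasonably smooth” function $f(x)$ that goes to zero as $|x|\to\infty$ can be expressed as a sum over plane waves,

$$f(x) = \int_{-\infty}^{\infty}\frac{dk}{2\pi}\,\tilde f(k)\,e^{ikx},$$

where

$$\tilde f(k) = \int_{-\infty}^{\infty}dx\,f(x)\,e^{-ikx}.$$

(Of course, we have to show these equations are consistent! We will.)

In three dimensions, the plane wave expression is $e^{i(\mathbf{k}\cdot\mathbf{r} - \omega t)}$, from which, dealing for the moment with space and time separately, the standard notation is

$$f(\mathbf{r}) = \int\frac{d^3k}{(2\pi)^3}\,\tilde f(\mathbf{k})\,e^{i\mathbf{k}\cdot\mathbf{r}}, \qquad f(t) = \int\frac{d\omega}{2\pi}\,\tilde f(\omega)\,e^{-i\omega t},$$

and the inverse transforms have the obvious sign changes (and no denominators).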

Fourier Transforms of Differential Operators

E&M is full of differential equations, and Fourier transforms are an important tool in finding solutions, for the simple reason that differentiation just becomes multiplication! Let’s see how this works.

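If the Fourier transform of $f(x)$ is $\tilde f(k)$, that of $df/dx$ is $ik\tilde f(k)$. Similarly, if the (three-dimensional) transform of $\varphi(\mathbf{r})$ is $\tilde\varphi(\mathbf{k})$, so

$$\varphi(\mathbf{r}) = \int\frac{d^3k}{(2\pi)^3}\,\tilde\varphi(\mathbf{k})\,e^{i\mathbf{k}\cdot\mathbf{r}},$$

then that of $\boldsymbol{\nabla}\varphi$ is $i\mathbf{k}\tilde\varphi(\mathbf{k})$, and that of $\nabla^2\varphi$ is $-k^2\tilde\varphi(\mathbf{k})$.

Important Exercise: Check these results by just differentiating. (Of course, we’re assuming the integrals still converge; in practice, this is rarely a problem.)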
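The derivative rule can also be checked numerically for a Gaussian, whose transform in this convention is $\sqrt{2\pi}\,e^{-k^2/2}$. This is a Python sketch rather than a general-purpose transform: `fourier` is an illustrative trapezoid-rule helper that assumes the function is smooth and negligible beyond $|x| = L$.

```python
import cmath
import math

def fourier(g, k, L=12.0, n=2400):
    """Trapezoid estimate of g~(k) = integral of g(x) exp(-i k x) dx, truncated to [-L, L].

    Assumes g is smooth and negligible for |x| > L (true for the Gaussian below).
    """
    dx = 2 * L / n
    total = 0.5 * (g(-L) * cmath.exp(1j * k * L) + g(L) * cmath.exp(-1j * k * L))
    for i in range(1, n):
        x = -L + i * dx
        total += g(x) * cmath.exp(-1j * k * x)
    return total * dx

def f(x):
    return math.exp(-x * x / 2)

def df(x):
    # derivative of f, worked out by hand
    return -x * math.exp(-x * x / 2)

for k in (0.5, 1.0, 2.0):
    # transform of df/dx versus i k times the transform of f
    print(k, fourier(df, k), 1j * k * fourier(f, k))
```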

Consistency of the Fourier Transform Equations: Introducing the Delta Function

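It’s now time to ask about the consistency of the first two equations:

$$f(x) = \int_{-\infty}^{\infty}\frac{dk}{2\pi}\,\tilde f(k)\,e^{ikx}, \qquad \tilde f(k) = \int_{-\infty}^{\infty}dx\,f(x)\,e^{-ikx}.$$

Feeding the second into the first we have

$$f(x) = \int_{-\infty}^{\infty}\frac{dk}{2\pi}\,e^{ikx}\int_{-\infty}^{\infty}dx'\,f(x')\,e^{-ikx'} = \int_{-\infty}^{\infty}dx'\left[\int_{-\infty}^{\infty}\frac{dk}{2\pi}\,e^{ik(x-x')}\right]f(x').$$

Remember this is a completely arbitrary function (well, differentiable) and ask how the first term can always equal the last term. It must be that the expression in the square brackets, which will clearly have to be expressed as the limit of some slightly better-defined sequence of integrals, picks out only the bit of $f(x')$ at $x' = x$. How can that be? Let’s do the integral, putting in gentle cutoffs $e^{-\varepsilon|k|}$ to make it well-defined:

$$\int_{-\infty}^{\infty}\frac{dk}{2\pi}\,e^{ik(x-x')}e^{-\varepsilon|k|} = \frac{1}{2\pi}\left[\frac{1}{\varepsilon - i(x-x')} + \frac{1}{\varepsilon + i(x-x')}\right] = \frac{1}{\pi}\frac{\varepsilon}{(x-x')^2 + \varepsilon^2}.$$

These are convergent integrals, and the result is called Dirac’s delta function,

$$\delta(x - x') = \lim_{\varepsilon\to0}\frac{1}{\pi}\frac{\varepsilon}{(x-x')^2 + \varepsilon^2}.$$

For nonzero $\varepsilon$ we see that this is a peaked function with height $1/\pi\varepsilon$, width of order $\varepsilon$, and total area equal to one (an elementary integral). As $\varepsilon\to0$ it becomes infinitely localized. This limiting case is not a function in the traditional sense (meaning something that gives a definite value at each point in its range), despite its name, but it has a well-defined meaning provided it is in an integrand. Mathematicians call it a distribution; it has all its weight at one point. It would be difficult to do physics without the delta function, and physicists don’t insist that it always be in an integrand, as you will find. For example, it’s convenient to refer to a point charge as a delta function charge distribution.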
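The picking-out property can be seen numerically with the finite-$\varepsilon$ Lorentzian $\delta_\varepsilon(x) = \varepsilon/\pi(x^2 + \varepsilon^2)$. In this Python sketch, the substitution $x = x_0 + \varepsilon\tan t$ turns $\delta_\varepsilon(x - x_0)\,dx$ into $dt/\pi$, which makes the integral easy to evaluate accurately for any $\varepsilon$. The test function $1/(1 + x^2)$ is an illustrative choice, picked because the exact smeared value is known: $(1+\varepsilon)/[(1+\varepsilon)^2 + x_0^2]$, which tends to $f(x_0)$ as $\varepsilon\to0$.

```python
import math

def delta_eps(x, eps):
    """Finite-eps Lorentzian (1/pi) * eps / (x^2 + eps^2); tends to delta(x) as eps -> 0."""
    return eps / (math.pi * (x * x + eps * eps))

def smear(f, x0, eps, n=20000):
    """Integral of delta_eps(x - x0) * f(x) over all x.

    With x = x0 + eps * tan(t), delta_eps(x - x0) dx = dt / pi, so the integral is
    (1/pi) * integral of f(x0 + eps * tan t) for t in (-pi/2, pi/2): midpoint rule in t.
    """
    dt = math.pi / n
    total = 0.0
    for i in range(n):
        t = -math.pi / 2 + (i + 0.5) * dt
        total += f(x0 + eps * math.tan(t))
    return total * dt / math.pi

def f(x):
    # illustrative test function: smear(f, x0, eps) = (1 + eps) / ((1 + eps)**2 + x0**2) exactly
    return 1.0 / (1.0 + x * x)

print(delta_eps(0.0, 0.01))         # peak height 1/(pi eps), about 31.8
for eps in (0.1, 0.01, 0.001):
    print(eps, smear(f, 0.5, eps))  # approaches f(0.5) = 0.8 as eps shrinks
```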

Fourier Transform of Spherically Symmetrical Functions, and Specifically 1/r.

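For a spherically symmetric function $f(r)$, the Fourier transform is

$$\tilde f(k) = \int d^3r\,f(r)\,e^{-i\mathbf{k}\cdot\mathbf{r}} = 2\pi\int_0^\infty r^2\,dr\,f(r)\int_{-1}^{1}d(\cos\theta)\,e^{-ikr\cos\theta} = \frac{4\pi}{k}\int_0^\infty r\,f(r)\sin kr\,dr.$$

Hence for $f(r) = 1/r$,

$$\tilde f(k) = \frac{4\pi}{k}\int_0^\infty\sin kr\,dr.$$

Writing $\sin kr\,e^{-\mu r}$ in place of $\sin kr$ to make the integral converge (then we’ll take the limit $\mu\to0$),

$$\tilde f(k) = \lim_{\mu\to0}\frac{4\pi}{k}\int_0^\infty\sin kr\,e^{-\mu r}\,dr = \lim_{\mu\to0}\frac{4\pi}{k}\cdot\frac{k}{k^2 + \mu^2} = \frac{4\pi}{k^2}.$$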
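The regularized integral can be checked by direct numerical quadrature. This Python sketch applies Simpson’s rule to $\frac{4\pi}{k}\int_0^{r_{\max}} r\,f(r)\sin kr\,dr$ for $f(r) = e^{-\mu r}/r$; the cutoff $r_{\max}$, the number of intervals, and the sample values of $k$ and $\mu$ are illustrative choices. The result should match $4\pi/(k^2 + \mu^2)$, which becomes $4\pi/k^2$ as $\mu\to0$.

```python
import math

def radial_ft(f, k, r_max, n=20000):
    """(4 pi / k) * integral from 0 to r_max of r f(r) sin(k r) dr, by Simpson's rule.

    Assumes r f(r) sin(k r) -> 0 at r = 0 and that the integrand is negligible beyond r_max.
    """
    if n % 2:
        n += 1  # Simpson's rule needs an even number of intervals

    def g(r):
        return r * f(r) * math.sin(k * r) if r > 0 else 0.0

    h = r_max / n
    s = g(0.0) + g(r_max)
    for i in range(1, n):
        s += (4 if i % 2 else 2) * g(i * h)
    return 4 * math.pi / k * s * h / 3

def yukawa(mu):
    # f(r) = exp(-mu r) / r; the Coulomb case is the limit mu -> 0
    return lambda r: math.exp(-mu * r) / r

for k, mu in [(2.0, 1.0), (0.5, 0.5)]:
    print(radial_ft(yukawa(mu), k, 40 / mu), 4 * math.pi / (k * k + mu * mu))
```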

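To check consistency, going the other way, we replace $r$ with $k$ in the radial formula (the sign of $i$ doesn’t matter for a spherically symmetric function) and include the factor $1/(2\pi)^3$:

$$f(r) = \int\frac{d^3k}{(2\pi)^3}\,\frac{4\pi}{k^2}\,e^{i\mathbf{k}\cdot\mathbf{r}} = \frac{1}{(2\pi)^3}\frac{4\pi}{r}\int_0^\infty k\cdot\frac{4\pi}{k^2}\sin kr\,dk = \frac{2}{\pi r}\int_0^\infty\frac{\sin kr}{k}\,dk.$$

Now in that last integral write $kr = x$ and find $\int_0^\infty(\sin x/x)\,dx$, independent of $r$. To evaluate this integral, note that the integrand is even, so the integral is half of $\int_{-\infty}^{\infty}(\sin x/x)\,dx$, which is the imaginary part of $\int_{-\infty}^{\infty}(e^{ix}/x)\,dx$ taken along the real axis. It’s OK to close in the upper half plane; the only contributor is the pole at the origin, which lies on the path and so contributes half of $2\pi i$ times its residue, that is, $i\pi$. Hence

$$\int_0^\infty\frac{\sin x}{x}\,dx = \frac{\pi}{2}, \qquad f(r) = \frac{2}{\pi r}\cdot\frac{\pi}{2} = \frac{1}{r}.$$

Similarly, if $f(r) = e^{-\mu r}/r$, then $\tilde f(k) = 4\pi/(k^2 + \mu^2)$. You’ll need these results in this course.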

Note: if you're not familiar with integrals in the complex plane, check my quantum notes here, and perhaps also the preceding quantum lecture.